Researchers have achieved a new record for qubit fidelity in a superconducting quantum computer system, overcoming a key barrier in quantum computing.

In a study published February 27 in the journal Nature Communications, scientists from IBM, Germany’s RWTH Aachen University, and Los Angeles-based startup Quantum Elements worked to correct and suppress quantum errors, the biggest hurdle to building machines more powerful than the fastest supercomputers.

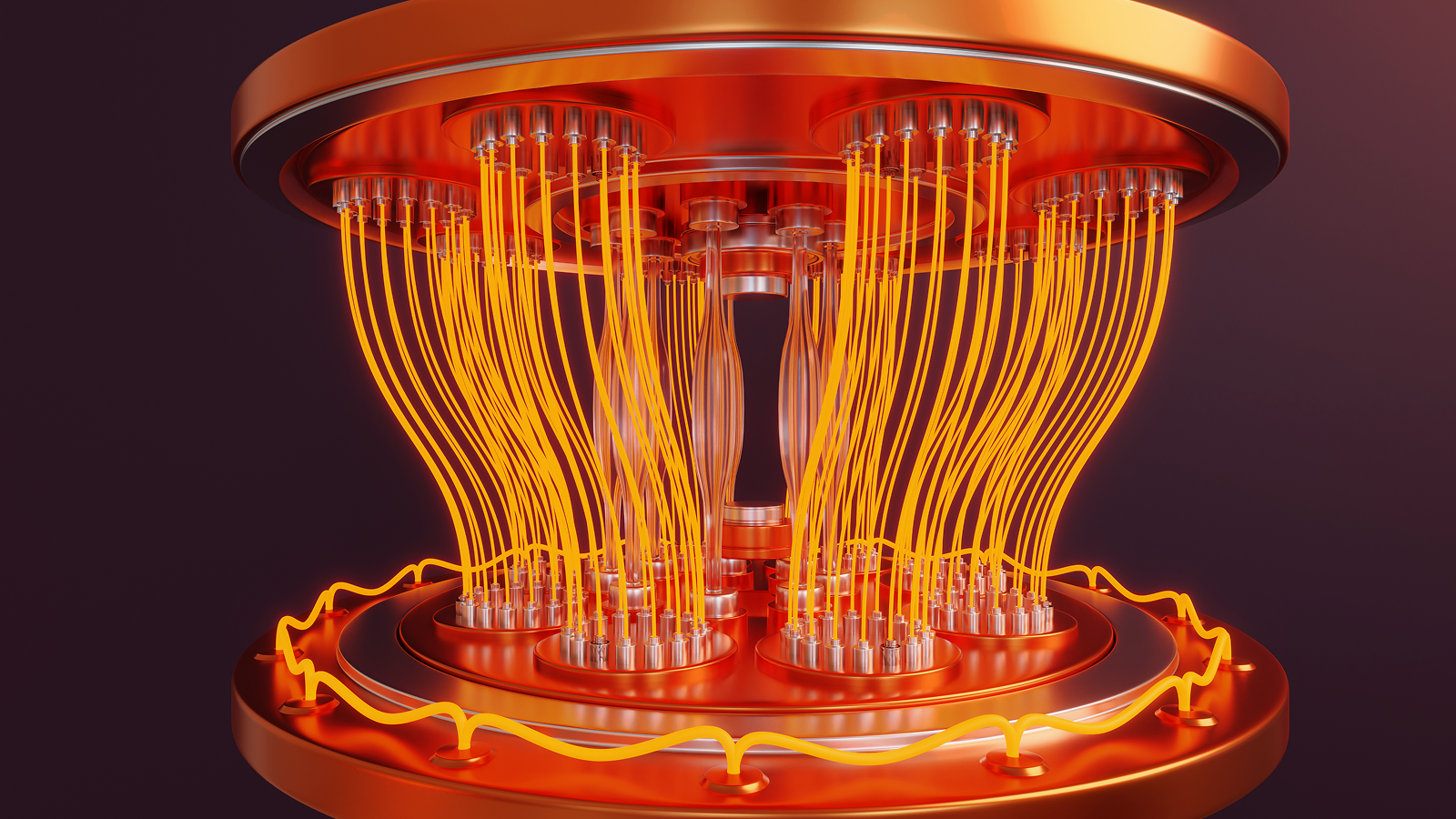

Superconducting quantum computers perform calculations with quantum bits (qubits), the quantum counterpart of classical computer bits. The system the researchers used (IBM’s 127-qubit Kyiv and Marrakesh processors) employs a combination of “physical qubits” and “logical qubits.” A logical qubit is a group of entangled physical qubits that store the same information in different locations in case one of the physical qubits fails during a computation.

Physical qubits are embedded in the hardware layers of quantum computers as complex, geometrically precise circuits made of superconducting metals. When cooled to near absolute zero, these metals lose all electrical resistance, allowing quantum information to flow without loss of energy.

However, these qubits are inherently fragile, susceptible to small perturbations such as vibrations, local background noise, and other environmental factors. To compensate for this vulnerability, scientists group multiple physical qubits together to form logical qubits.

When computations are performed across logical qubits, the physical qubits act much like parity bits that help detect and correct errors. But this setup is itself susceptible to “logical errors,” the scientists say in the new study.

A logical error occurs when more than one physical qubit within a logical qubit succumbs to noise. Essentially, if one physical qubit fails, the other physical qubits act as fail-safes against that false signal. But if multiple qubits fail, the system treats the errors they produce as valid signals, and the computation is ruined.
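The failure mode described above can be illustrated with a classical analogy. The sketch below assumes a three-bit repetition code with majority-vote decoding, which is not the quantum code used in the study; the function names are hypothetical and chosen only for illustration. It shows one flipped bit being outvoted, while two flipped bits are mistaken for a valid signal.

```python
def encode(bit, copies=3):
    """Store one logical bit as several identical physical bits."""
    return [bit] * copies


def flip(bits, positions):
    """Simulate noise by flipping the bits at the given positions."""
    return [b ^ 1 if i in positions else b for i, b in enumerate(bits)]


def decode(bits):
    """Recover the logical bit by majority vote."""
    return int(sum(bits) > len(bits) / 2)


logical_zero = encode(0)

# One faulty bit: the two healthy bits outvote it.
one_error = decode(flip(logical_zero, {1}))

# Two faulty bits: the errors now form the majority (a logical error).
two_errors = decode(flip(logical_zero, {0, 2}))

print(one_error)   # 0
print(two_errors)  # 1
```

Real quantum codes cannot simply copy a state and read it out, so this is only a picture of why multiple simultaneous faults are so damaging.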

Suppress errors before they occur

The 127-qubit IBM system used by the researchers is prone to a particular type of noise called “ZZ crosstalk,” produced by the particular arrangement of physical qubits.

The Quantum Elements team developed an approach to deal with this particular type of noise. It suppresses crosstalk errors before they occur, thereby reducing the total number of undetectable logical errors. The team combined this technique with existing error correction tools to create a new hybrid protocol.

As a result, the researchers achieved the highest-fidelity quantum computations on superconducting qubits, sustained for the longest period on record. In other words, these were the computations with the lowest amount of noise.

According to the study, previous efforts achieved a peak encoding fidelity of 79.5% in one trial and 93.7% in another, but fidelity then dropped to about 30% after about 27 microseconds.

The peak fidelity metric indicates the highest precision achieved within a quantum system, which occurs immediately after the logical qubit is formed. The longer a quantum computer can maintain peak or near-peak fidelity, the better it can run quantum algorithms.

The team used a new technique called normalizer dynamical decoupling (NDD) to break these previous records. They achieved a peak encoding fidelity of 98.05% and maintained 84.87% fidelity after 55 microseconds.

Conventional dynamical decoupling, a standard error suppression technique, uses microwave pulses to force physical qubits to flip back and forth. This refocuses the qubits so that the background noise tends to average out, but it acts on one physical qubit at a time.
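A toy model may help show why flipping a qubit averages out noise. The sketch below assumes the simplest possible case: each run of the experiment has an unknown but constant frequency offset, and each flip pulse is instantaneous and perfect, reversing the direction in which phase accumulates. The function names, noise strength, and timings are hypothetical, and this is not the NDD sequence from the study.

```python
import cmath
import random


def net_phase(detuning, total_time, flip_times):
    """Phase accumulated by one qubit; each flip reverses the sign of accumulation."""
    sign, last, phase = 1.0, 0.0, 0.0
    for t in sorted(flip_times) + [total_time]:
        phase += sign * detuning * (t - last)
        sign, last = -sign, t
    return phase


def coherence(detunings, total_time, flip_times):
    """Magnitude of the phase factor averaged over many noisy runs (1.0 = no dephasing)."""
    total = sum(cmath.exp(1j * net_phase(d, total_time, flip_times)) for d in detunings)
    return abs(total) / len(detunings)


random.seed(0)
# Hypothetical static noise: a random offset (rad/us) that differs from run to run.
detunings = [random.gauss(0.0, 1.0) for _ in range(2000)]

free = coherence(detunings, total_time=5.0, flip_times=[])     # no pulses
echo = coherence(detunings, total_time=5.0, flip_times=[2.5])  # one flip at the midpoint

print(free)  # close to zero: the runs have drifted out of step
print(echo)  # 1.0: the second half undoes the first
```

Real noise fluctuates in time and real pulses are imperfect, which is why practical sequences use many pulses and why each extra pulse carries a cost.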

However, there are problems when scaling up this technology. The more physical qubits in the system, the more microwave pulses are needed to suppress noise. Ultimately, the study authors explained, this introduces additional noise and adds more errors to the system, defeating its purpose.

Rather than implementing this approach strictly at the hardware layer, the scientists applied it at the logical qubit layer. To do this, they had to devise a way to choose the pulses using the mathematical “normalizer” of the quantum code running on the machine itself. This allowed the pulses to follow a rhythm matched to the machine’s code.

As a result, normalizer dynamical decoupling provides the highest-fidelity calculations on a superconducting quantum computer to date. The longer this level of fidelity can be maintained, the more useful quantum computers can be expected to become.

The number of quantum gates (individual quantum operations) a quantum system can perform is determined by how long it can maintain quantum fidelity. A single gate typically takes about 10-12 nanoseconds to execute. This means that roughly 4,600 to 5,500 consecutive operations can occur in the 55 microseconds demonstrated in this study before the data degrades.
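The gate estimate follows from dividing the fidelity window by the gate time. As a quick check, the snippet below uses the figures quoted above (a 55-microsecond window and gates of 10 to 12 nanoseconds); the function name is hypothetical, and the calculation assumes gates run strictly back to back with no idle time.

```python
def gate_budget(window_us, gate_ns):
    """Whole gates that fit, one after another, inside the fidelity window."""
    window_ns = window_us * 1000  # 1 microsecond = 1,000 nanoseconds
    return window_ns // gate_ns


fast = gate_budget(55, 10)  # shortest quoted gate time
slow = gate_budget(55, 12)  # longest quoted gate time

print(fast)  # 5500
print(slow)  # 4583
```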

The ultimate goal of quantum computing is to create devices that can run at high fidelity for long enough to perform truly useful operations, such as running Shor’s algorithm to break encryption. Such a task is estimated to take a capable quantum system weeks to months to complete. That is not so bad considering it could take a classical computer hundreds of trillions of years to achieve the same result.

Although 55 microseconds of record-breaking high-fidelity operation is still far from practical, it represents a significant advance over previous efforts.

Vezvaee, A., Tripathi, V., Morford-Oberst, M., Butt, F., Kasatkin, V., and Lidar, D. A. (2026). Demonstration of high-fidelity entangled logical qubits using transmons. Nature Communications. https://doi.org/10.1038/s41467-026-70011-3
