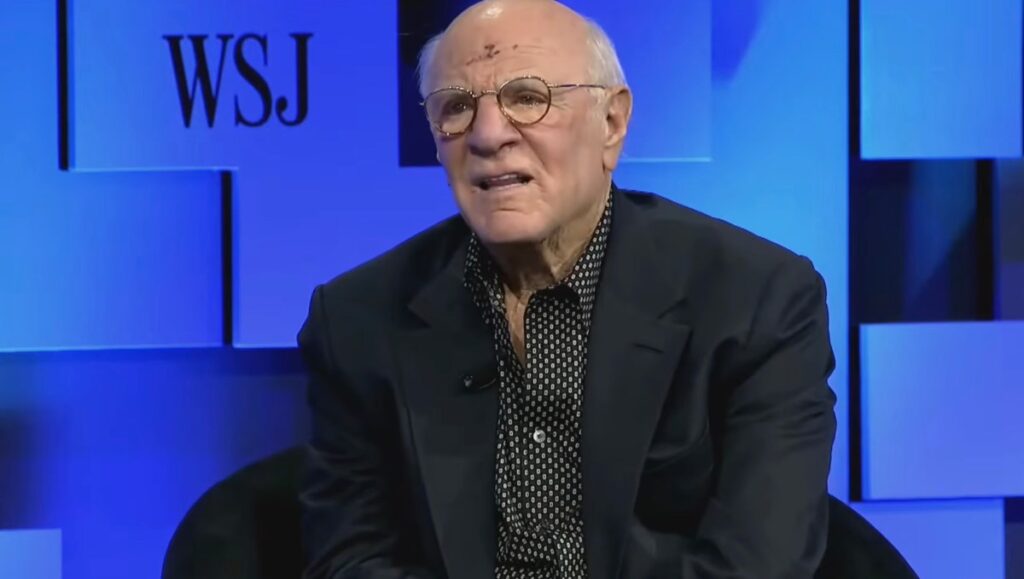

Billionaire media mogul Barry Diller thinks OpenAI CEO Sam Altman can be trusted, despite recent reports to the contrary. Diller took the stage this week at The Wall Street Journal’s Future of Everything conference to defend the AI executive, who has been accused by some former colleagues and board members of being manipulative and deceptive.

Diller, who is friendly with Altman, was responding to a question about whether people should trust Altman to ensure that artificial intelligence benefits humanity.

Specifically, he was asked about a theoretical form of AI known as artificial general intelligence (AGI), which could one day outperform humans at any task.

The media executive, who is also the co-founder of Fox Broadcasting and chairman of IAC and Expedia Group, said he believes Altman is sincere in his pursuits, but said that’s not really the concern people should be paying attention to. Rather, the concern is the unknown outcomes that AI may bring about.

“One of the big problems with AI is that it goes far beyond trust,” Diller said. “Trust may be irrelevant, because what’s happening is a surprise to the people who are making those things happen. And I’ve spent a lot of time with different people who have been in the AI creation mode, and they themselves have a sense of wonder. So… it’s the big unknown. We don’t know. They don’t know,” he explained.

“We’ve embarked on something that’s going to change just about everything. It’s not underreported. Now, I don’t really care if these huge investments come to fruition. I’m not invested in it, but I think we’ll see progress,” Diller added.

Still, the media mogul said he believes Altman is an honest and “decent person with good values,” and that he believes most of the people leading the charge are good stewards. (Notably, Diller didn’t say which AI leaders he thought were dishonest.)

“But the problem isn’t their management. The problem is … that we’re dealing with a true unknown. They don’t know what will happen when they get AGI, but we’re getting closer to it. We’re not there yet, but we’re getting closer and closer, faster and earlier. And we have to think about guardrails,” Diller said.

Additionally, Diller warned that if humans don’t think about guardrails, the alternative is “some other force, an AGI force, will do it themselves. And once that happens, once you let it go, there’s no going back.”
