Judy Hill, Deputy Director of High Performance Computing at Lawrence Livermore National Laboratory (LLNL), talks about Livermore Computing’s High Performance Computing Center and the groundbreaking research being conducted there.

High Performance Computing (HPC) drives discovery and innovation through the extraordinary simulations it makes possible. HPC is now high on the list of U.S. priorities, with the aim of harnessing its potential to save energy, reduce emissions, increase competitiveness, and strengthen the nation’s position as a global technology leader. At U.S. Department of Energy (DOE) facilities such as Lawrence Livermore National Laboratory (LLNL), HPC has become the “third pillar” of research, joining theory and experiment as an equal partner.

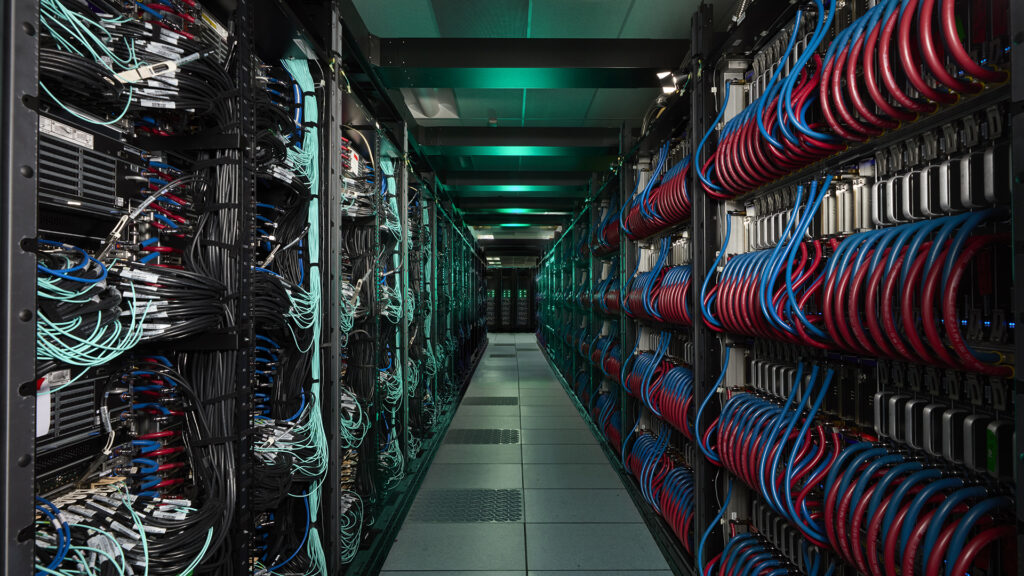

Livermore Computing, LLNL’s premier HPC center, provides the systems, tools, and expertise that support advancements in HPC capabilities. The center’s mission is threefold.

To learn more about the work being done at Livermore Computing and the potential it has for a wide range of real-world applications, The Innovation Platform spoke with Judy Hill, LLNL’s Deputy Director of High Performance Computing.

Can you briefly explain how LLNL contributes to the HPC environment in the U.S. and around the world?

LLNL has been a leader in HPC since the Laboratory’s founding. The Laboratory’s first major computer purchase was a UNIVAC 1 in 1953, and computing has been central to our mission ever since. That tradition continues today as we push the boundaries in several important ways.

First, there’s the hardware itself. We recently brought online El Capitan, the world’s fastest benchmarked supercomputer, which delivers exascale performance of nearly 2 exaflops (nearly 2 quintillion calculations per second). Prior to that, we operated Sierra and Lassen, systems essential to everything from weapons simulation to materials science discovery to advanced artificial intelligence (AI) and machine learning research. These are not just great machines; they are tools that allow us to tackle previously unsolvable problems.

To bring these advanced supercomputers to life, we work closely with companies such as HPE, AMD, NVIDIA, and Intel in co-design partnerships. We collaboratively design these systems to meet the specific needs of our applications. For example, El Capitan’s AMD MI300A is an accelerated processing unit (APU) that combines central processing unit (CPU) and graphics processing unit (GPU) functionality on one package with shared memory. This design came from direct input about workloads that required tight integration of general-purpose CPUs and accelerators such as GPUs, without the bottlenecks of separate chips.

Another important part of our impact comes through open source software development. We’ve released dozens of projects on GitHub that the broader HPC community relies on, including Spack for package management and Flux for workload management. When we solve a systems problem, we share the solution so others can benefit. This is the foundation of how we contribute to the broader HPC ecosystem.

Finally, we invest in our people. We train the next generation of computational scientists through summer programs and educational initiatives, and we share what we learn with the broader community. It’s all part of keeping America at the forefront of computing while ensuring we solve important problems.

What are Livermore Computing’s current main priorities and how do these fit with national and international goals for HPC?

Our priorities span both short-term operational challenges and long-term strategic initiatives. In the short term, we’re focused on helping users use El Capitan efficiently. This includes not only optimizing their application codes, but also enabling them to integrate AI capabilities into their workflows. Looking further ahead, we believe we are on the cusp of a fundamental change in scientific computing and HPC. In the future, we believe that users will expect to interact with HPC environments in a manner similar to cloud environments, rather than the monolithic, batch-scheduled systems of the past.

Efficient operation and utilization of El Capitan

Although El Capitan is now in production, our main priority is to continue optimizing its performance and helping applications take full advantage of its features. This means tuning critical codes for the MI300A architecture and developing a memory model that efficiently leverages the APU’s unique memory design to maximize system utilization and reliability.

Integrating AI and machine learning

AI is everywhere, and Livermore Computing is no exception. As part of the Department of Energy’s Genesis mission, we are rapidly enabling AI capabilities to improve the productivity of application developers when creating software for El Capitan and other systems. We are also using machine learning models to speed up physics simulations, developing surrogate models that can replace expensive computation, and applying AI to data analysis from experiments and simulations.
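To make the idea of a surrogate model concrete, here is a minimal Python sketch. It is not from the interview and does not represent LLNL software. It assumes a hypothetical `expensive_simulation` function standing in for a costly physics code, and it swaps in simple piecewise-linear interpolation (the hypothetical `InterpolatingSurrogate` class) in place of the machine learning models a production workflow would train. The pattern is the same: run the expensive code a limited number of times, fit a cheap approximation, then query the approximation instead.

```python
import bisect
import math


def expensive_simulation(x: float, steps: int = 5_000) -> float:
    """Hypothetical stand-in for a costly physics code.

    Approximates the integral of exp(-t^2) over [0, x] with the midpoint rule.
    """
    h = x / steps
    return h * sum(math.exp(-((i + 0.5) * h) ** 2) for i in range(steps))


class InterpolatingSurrogate:
    """Piecewise-linear surrogate built from a few expensive runs.

    This is an assumption made for illustration: real surrogates are often
    neural networks or other learned models, not lookup tables.
    """

    def __init__(self, simulate, lo: float, hi: float, n_samples: int):
        # "Training": evaluate the expensive code on an evenly spaced grid.
        self.xs = [lo + (hi - lo) * i / (n_samples - 1) for i in range(n_samples)]
        self.ys = [simulate(x) for x in self.xs]

    def predict(self, x: float) -> float:
        # Locate the grid interval containing x, clamped to the table range.
        i = bisect.bisect_right(self.xs, x) - 1
        i = min(max(i, 0), len(self.xs) - 2)
        x0, x1 = self.xs[i], self.xs[i + 1]
        t = (x - x0) / (x1 - x0)
        # Linear blend of the two neighboring expensive results.
        return (1 - t) * self.ys[i] + t * self.ys[i + 1]


if __name__ == "__main__":
    surrogate = InterpolatingSurrogate(expensive_simulation, 0.0, 2.0, 41)
    x = 1.234
    print("surrogate :", surrogate.predict(x))
    print("simulation:", expensive_simulation(x))
```

After the 41 up-front runs, each `predict` call costs a table lookup rather than thousands of function evaluations. The trade-off is accuracy: the surrogate is only trustworthy inside the range it was sampled on, which is why such models are validated against the full simulation.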

Evolving into the HPC center of the future

We’re also focused on what’s next for HPC. In terms of system architecture, we are already working with technology vendors on the next generation of memory subsystems and interconnects that will be critical to future supercomputers. We continue to have an active dialogue with chip designers to highlight the features we need for our applications as they consider future CPU, GPU, and other accelerator designs. But the real transformation may be the convergence of cloud methodologies and the HPC ecosystem. Users don’t necessarily care which system solves their problem; they only care that they get the correct answer in a known timescale. We are working to transform the HPC ecosystem to become more cloud-like and improve the overall experience for end users.

What are the main challenges threatening HPC innovation and how can these be overcome?

The HPC landscape is rapidly evolving, presenting both exciting opportunities and significant challenges that will shape the future. HPC has historically overcome barriers by leveraging broader industry trends, such as the move from vector processors to commodity clusters, the rise of GPU acceleration, and now integration with cloud computing. This ability to adapt and innovate will remain a core strength in navigating the challenges ahead.

AI revolution and resource competition

The explosive growth of AI and machine learning presents both opportunities and threats to traditional HPC. While AI technologies can enhance modeling and simulation through surrogate models and improved data analysis, massive commercial investments in AI are reshaping the computing environment in ways that may not align with the needs of HPC. GPU manufacturers are increasingly optimizing their products for AI training and inference workloads that can take advantage of lower-precision arithmetic, rather than for traditional scientific and technical computing, which often requires double-precision floating-point performance. Huge demand for AI computing is also driving up costs and creating supply constraints for high-end accelerators. This is a case where HPC needs to adapt to broader industry trends by considering the opportunities that mixed-precision algorithms can bring and by expanding the conversation and use cases to “HPC and AI” rather than “HPC or AI.”
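One well-known example of a mixed-precision algorithm is iterative refinement: solve a linear system cheaply in low precision, then correct the answer using residuals computed in double precision. The Python sketch below is illustrative only and is not taken from the interview or from LLNL codes. The helper names (`f32`, `solve_low_precision`, `residual`, `refine`) are hypothetical, and single precision is emulated by rounding through `struct` rather than by running on real low-precision hardware.

```python
import struct


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def solve_low_precision(A, b):
    """Gaussian elimination with partial pivoting, rounded to float32 at each step."""
    n = len(b)
    # Augmented matrix [A | b], stored as float32-representable values.
    M = [[f32(v) for v in row] + [f32(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = f32(M[r][col] / M[col][col])
            for c in range(col, n + 1):
                M[r][c] = f32(M[r][c] - f32(factor * M[col][c]))
    # Back substitution, also in emulated single precision.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = M[r][n]
        for c in range(r + 1, n):
            s = f32(s - f32(M[r][c] * x[c]))
        x[r] = f32(s / M[r][r])
    return x


def residual(A, b, x):
    """Compute r = b - A x in double precision."""
    return [bi - sum(aij * xj for aij, xj in zip(row, x)) for row, bi in zip(A, b)]


def refine(A, b, iterations: int = 5):
    """Low-precision solves plus double-precision residual corrections."""
    x = solve_low_precision(A, b)
    for _ in range(iterations):
        r = residual(A, b, x)            # accurate residual (double precision)
        d = solve_low_precision(A, r)    # cheap correction (single precision)
        x = [xi + di for xi, di in zip(x, d)]
    return x


if __name__ == "__main__":
    A = [[4.1, 1.3, 2.7], [1.3, 3.9, 0.2], [2.7, 0.2, 5.6]]
    x_true = [0.1, -0.2, 0.3]
    b = [sum(a * x for a, x in zip(row, x_true)) for row in A]
    print("low precision only:", solve_low_precision(A, b))
    print("after refinement  :", refine(A, b))
```

For a well-conditioned system like this one, the expensive part of the work (the solves) stays in low precision while the final answer approaches double-precision accuracy. Ill-conditioned problems may converge slowly or not at all, which is one reason mixed precision needs to be evaluated per application.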

Paradigm shift in cloud computing

Although cloud computing has many benefits, it also presents challenges when integrating with traditional HPC. The economics of cloud computing are not always favorable for continuous, high-utilization workloads, which are typical of most HPC simulation campaigns. There are also cultural and workflow differences between traditional HPC users and cloud-native approaches. The HPC center of the future we envision is a hybrid model that combines the best of both worlds. That might mean on-premises infrastructure with cloud-like interfaces and features, or selective use of commercial clouds for appropriate workloads. At LLNL, we recognize that the future of HPC will likely involve a variety of computing resources rather than a single monolithic system, and we are actively working on this transformation.

Workforce challenges

The HPC ecosystem is becoming increasingly complex, and there is a critical shortage of talent with the necessary specialized skills. The future of modeling and simulation requires not only traditional HPC expertise, but also deep knowledge of AI/ML, advanced programming models, and new architectures. Universities are not producing enough graduates with these skills, and competition for people with this breadth of knowledge is fierce. We need to invest in education and training programs that develop the next generation of HPC professionals. We also need to attract people to those programs by highlighting important scientific problems that can only be solved with HPC resources.

What are some of the key discoveries and innovations facilitated or supported by LLNL’s HPC capabilities?

LLNL’s HPC capabilities have led to groundbreaking advances both in national security missions and, more broadly, in a variety of scientific fields, from materials science to nuclear fusion to astrophysics. Examples include:

Stockpile modernization

As part of the core nuclear security mission of the National Nuclear Security Administration (NNSA), LLNL contributes to the modernization of the nuclear stockpile by ensuring its safety, security, and reliability without resorting to underground nuclear testing. HPC enables complex, high-fidelity, multiphysics simulations of weapons performance, aging, and materials under extreme conditions, modeling everything from microsecond nuclear reactions to decades of degradation. Systems like El Capitan provide higher-fidelity simulations that improve stockpile assessments.

Inertial confinement fusion and the National Ignition Facility

LLNL achieved a historic breakthrough in December 2022 when the National Ignition Facility (NIF) demonstrated fusion ignition, producing more energy from fusion than the laser energy delivered to the target. This achievement required decades of HPC-powered research to model the complex physics of laser-driven fusion, including radiation transport, fluid dynamics, and plasma physics. HPC will continue to play an important role in designing NIF targets, optimizing laser pulse shapes, and understanding experimental results. Most recently, the Inertial Confinement on El Capitan (ICECap) project has developed an AI-driven workflow built on extensive multiphysics simulations to optimize the design of the ignition capsules and cavities that make up fusion targets.

COVID-19, GUIDE, and computational biology

During the COVID-19 pandemic, LLNL rapidly pivoted HPC resources to support the national response. Scientists used LLNL HPC machines to screen millions of potential antibody variants against SARS-CoV-2 proteins and identify promising therapeutic candidates. This AI and HPC workflow allowed researchers to narrow that vast space to the top 376 computational designs for experimental testing, dramatically accelerating the redesign process compared with lab-only discovery. This work continues to evolve into projects like GUIDE, where researchers demonstrate how HPC+AI can help redesign and restore efficacy to antibody therapeutics whose ability to fight viruses has been compromised by viral evolution.

This article will also be published in the quarterly magazine issue 25.
