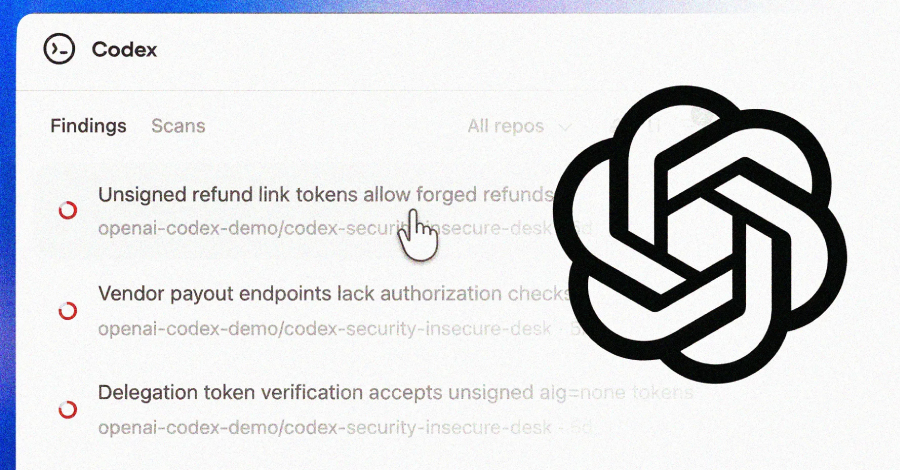

OpenAI on Friday began rolling out Codex Security, an artificial intelligence (AI)-powered security agent designed to discover, verify, and suggest fixes for vulnerabilities.

The feature is available as a research preview to ChatGPT Pro, Enterprise, Business, and Edu customers via Codex web, with free usage for the next month.

“It builds deep context about a project to identify complex vulnerabilities that other agent tools miss, uncovering reliable findings with fixes that meaningfully improve system security while protecting users from the noise of unimportant bugs,” the company said.

Codex Security is an evolution of Aardvark, which OpenAI launched in private beta in October 2025 as a way for developers and security teams to detect and fix security vulnerabilities at scale.

Over the past 30 days of the beta period, Codex Security scanned more than 1.2 million commits across external repositories, identifying 792 critical findings and 10,561 high-severity findings. These include vulnerabilities in open source projects such as OpenSSH, GnuTLS, GOGS, Thorium, libssh, PHP, and Chromium. Some of them are listed below:

- GnuPG – CVE-2026-24881, CVE-2026-24882
- GnuTLS – CVE-2025-32988, CVE-2025-32989
- GOGS – CVE-2025-64175, CVE-2026-25242
- Thorium – CVE-2025-35430, CVE-2025-35431, CVE-2025-35432, CVE-2025-35433, CVE-2025-35434, CVE-2025-35435, CVE-2025-35436

According to the AI company, the latest version of its application security agent leverages the reasoning capabilities of its frontier models and combines them with automated validation to minimize false positives and deliver actionable fixes.

OpenAI said repeated scans of the same repositories showed improving accuracy and declining false positive rates over time, with false positives falling by more than 50% across all repositories.

In a statement shared with The Hacker News, OpenAI said Codex Security is designed to improve the signal-to-noise ratio by grounding vulnerability discovery in the context of the system and validating findings before presenting them to users.

Specifically, the agent works in three stages. First, it analyzes the repository to understand the security-relevant structure of the project and generates an editable threat model that captures what the system does and where it is most exposed.

Once that system context is established, Codex Security uses it as a foundation to identify vulnerabilities and classify findings by their real-world impact. Flagged issues are then pressure-tested and validated in a sandboxed environment.

“When Codex Security is configured in a project-tailored environment, potential issues can be directly examined in the context of the running system,” OpenAI said. “That deeper validation further reduces false positives and enables the creation of a working proof of concept, giving security teams stronger evidence and a clearer path to remediation.”

In the final stage, the agent suggests fixes that best match the system’s behavior to reduce regressions and facilitate review and deployment.
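OpenAI has not published how Codex Security is implemented. As a rough mental model only, the three stages described above (threat modeling, impact-ranked discovery with sandbox validation, and fix suggestion) can be sketched as a toy Python pipeline. Every name here (`Finding`, `build_threat_model`, `rank_and_validate`, `propose_fixes`), the 1 to 4 severity scale, the doubled weight for externally reachable components, and the lambda standing in for sandbox validation are hypothetical assumptions, not the product's actual logic.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


# Hypothetical data model for illustration only; these names are not
# part of any Codex Security API.
@dataclass
class Finding:
    component: str
    title: str
    severity: int  # assumed scale: 1 (low) to 4 (critical)
    validated: bool = False
    fix: Optional[str] = None


def build_threat_model(components: dict[str, bool]) -> dict[str, float]:
    """Stage 1 (assumed): weight each component by exposure.
    Externally reachable components count double."""
    return {name: 2.0 if exposed else 1.0 for name, exposed in components.items()}


def rank_and_validate(
    findings: list[Finding],
    model: dict[str, float],
    reproduce: Callable[[Finding], bool],
) -> list[Finding]:
    """Stage 2 (assumed): order findings by severity scaled by exposure,
    then keep only those a sandbox check reproduces."""
    ranked = sorted(
        findings,
        key=lambda f: f.severity * model.get(f.component, 1.0),
        reverse=True,
    )
    confirmed = []
    for f in ranked:
        if reproduce(f):  # stand-in for a sandboxed proof of concept
            f.validated = True
            confirmed.append(f)
    return confirmed


def propose_fixes(
    findings: list[Finding], propose: Callable[[Finding], str]
) -> list[Finding]:
    """Stage 3 (assumed): attach a suggested patch to each validated finding."""
    for f in findings:
        f.fix = propose(f)
    return findings


# Toy run: the reproducer rejects one finding as a false positive.
model = build_threat_model({"auth_api": True, "report_job": False})
findings = [
    Finding("report_job", "path traversal", 3),
    Finding("auth_api", "missing rate limit", 2),
    Finding("auth_api", "SQL injection", 4),
]
confirmed = rank_and_validate(
    findings, model, reproduce=lambda f: f.title != "missing rate limit"
)
fixed = propose_fixes(confirmed, propose=lambda f: f"patch {f.component}: {f.title}")
for f in fixed:
    print(f.title, "->", f.fix)
```

In this toy run the exposed SQL injection outranks the internal path traversal, and the unreproduced rate-limit finding never reaches the fix stage, which mirrors the article's point that validation happens before anything is surfaced to users.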

The Codex Security news comes weeks after Anthropic launched Claude Code Security to help users scan software codebases for vulnerabilities and suggest patches.
