New research from Check Point reveals a previously unknown vulnerability in OpenAI ChatGPT that could allow sensitive conversation data to be exposed without the user’s knowledge or consent.

“A single malicious prompt can turn a normal conversation into a secret exfiltration channel, exposing users’ messages, uploaded files, and other sensitive content,” the cybersecurity firm said in a report released today. “A backdoor GPT could exploit the same vulnerability to access user data without the user’s knowledge or consent.”

Following responsible disclosure, OpenAI addressed the issue on February 20, 2026. There is no evidence that it was ever exploited in the wild.

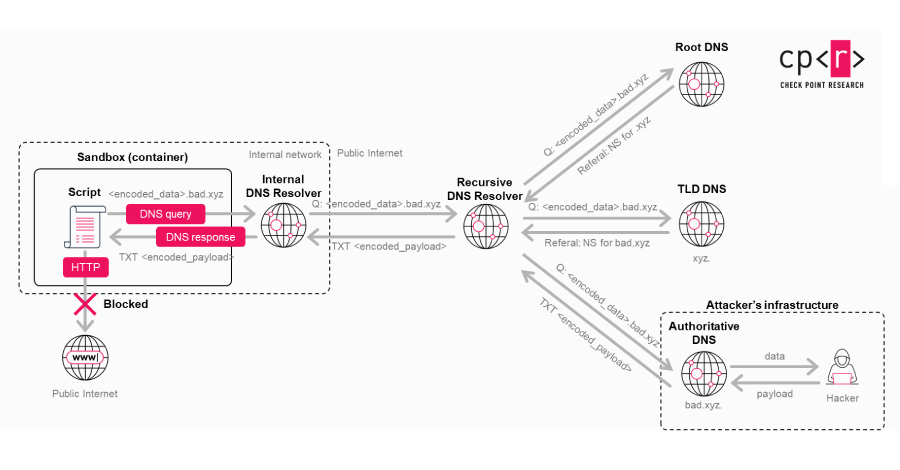

ChatGPT is built with various guardrails meant to prevent unauthorized data sharing and block direct outbound network requests. However, the newly discovered vulnerability bypasses these safeguards entirely by abusing a side channel in the Linux runtime that the artificial intelligence (AI) agent uses to execute code and analyze data.

Specifically, it abuses a hidden DNS-based communication path as a “covert transport mechanism”, encoding information into DNS requests to get around the visible AI guardrails. The same hidden path can also be used to establish remote shell access and execute commands inside the Linux runtime.
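The article does not describe Check Point's exact encoding, so the following is only a generic sketch of the well-known DNS-tunneling idea, not the researchers' payload. Bytes are Base32-encoded and split into labels that fit DNS length limits (63 octets per label, 253 characters per dotted name). Merely resolving the resulting names hands the data to whoever runs the authoritative server for the domain. The function names and the `attacker.example` domain are hypothetical, and the code only builds strings; it never sends a query.

```python
import base64

MAX_LABEL_LEN = 63  # RFC 1035 limit on a single DNS label, in octets
MAX_NAME_LEN = 253  # limit on a full domain name in dotted text form


def encode_to_query_names(data: bytes, domain: str, chunk_len: int = 50) -> list[str]:
    """Split `data` into hypothetical query names of the form <seq>.<chunk>.<domain>.

    Illustration only: names are built in memory and never resolved.
    """
    if chunk_len > MAX_LABEL_LEN:
        raise ValueError("chunk_len exceeds the 63-octet DNS label limit")
    # Base32 output (A-Z, 2-7) is hostname-safe; DNS is case-insensitive, so
    # lower-case it and drop the '=' padding, which is not valid in a label.
    text = base64.b32encode(data).decode("ascii").rstrip("=").lower()
    names = []
    for seq, start in enumerate(range(0, len(text), chunk_len)):
        name = f"{seq}.{text[start:start + chunk_len]}.{domain}"
        if len(name) > MAX_NAME_LEN:
            raise ValueError("domain too long for this chunk size")
        names.append(name)
    return names


def decode_query_names(names: list[str], domain: str) -> bytes:
    """Reassemble the payload as a receiver reading its DNS query logs would."""
    suffix = "." + domain
    parts = []
    for name in names:
        seq, chunk = name[: -len(suffix)].split(".", 1)
        parts.append((int(seq), chunk))
    # Queries may arrive out of order, so sort by the sequence label first.
    text = "".join(chunk for _, chunk in sorted(parts)).upper()
    # Restore the padding stripped during encoding (Base32 works in 8-char blocks).
    text += "=" * (-len(text) % 8)
    return base64.b32decode(text)
```

For example, `encode_to_query_names(b"hi", "attacker.example")` returns `["0.nbuq.attacker.example"]`. Each name is unique, so resolvers typically cannot answer from cache and forward the lookup toward the domain's authoritative server. That forwarding is what turns ordinary lookups into a one-way channel. These same constraints are what defenders tend to look for: bursts of long, random-looking labels under a single domain.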

Because no warning or user approval dialog is displayed, the vulnerability creates a security blind spot: the AI system assumes the environment is isolated even as data leaves it.

For example, an attacker could convince a user to paste a malicious prompt by presenting it as a way to unlock premium features for free or to improve ChatGPT’s performance. The threat grows when the technique is embedded in a custom GPT, because the malicious logic can then be built directly into the GPT instead of relying on tricking users into pasting a specially crafted prompt.

“Importantly, the model operated on the assumption that data could not be sent directly to the outside world in this environment, so it did not recognize the behavior as an external data transfer that required resistance or user intervention,” Check Point explained. “As a result, this breach did not trigger any warnings about data leaving the conversation, did not require explicit user confirmation, and remained largely invisible from the user’s perspective.”

As tools like ChatGPT are increasingly incorporated into corporate environments and users upload highly personal information, vulnerabilities like this highlight the need for organizations to implement their own layers of security to guard against prompt injection and other unexpected behavior in AI systems.

“This study confirms a hard truth in the age of AI: Don’t assume that AI tools are secure by default,” Eli Smadja, head of research at Check Point Research, said in a statement shared with The Hacker News.

“As AI platforms evolve into complete computing environments that handle the most sensitive data, native security controls alone are no longer sufficient. Organizations need independent visibility and layered protection between the organization and the AI vendor. That’s the way to safely move forward by rethinking the security architecture for AI, rather than reacting to the next incident.”

The development comes amid observations of threat actors publishing web browser extensions (or updating existing extensions) that engage in the dubious practice of prompt poaching, which silently siphons the conversations of AI chatbots without users’ consent, highlighting how seemingly innocuous add-ons can become channels for data leakage.

“Needless to say, these plugins open the door to several risks, including identity theft, targeted phishing campaigns, and the sale of sensitive data on underground forums,” said Expel researcher Ben Nahoney. “For organizations whose employees may have unknowingly installed these extensions, intellectual property, customer data, and other sensitive information could be compromised.”

OpenAI Codex command injection vulnerability leads to GitHub token compromise

The disclosure coincides with the discovery of a critical command injection vulnerability in OpenAI Codex, a cloud-based software engineering agent. The flaw could be exploited to steal GitHub authentication data and ultimately compromise multiple users working with shared repositories.

“This vulnerability exists within the task creation HTTP request and allows an attacker to smuggle arbitrary commands via the GitHub branch name parameter,” BeyondTrust Phantom Labs researcher Tyler Jespersen said in a report shared with The Hacker News. “This could result in the theft of the victim’s GitHub user access token, the same token that Codex uses to authenticate with GitHub.”

According to BeyondTrust, the issue stems from improper input sanitization of GitHub branch names when tasks are executed in the cloud. An attacker could inject arbitrary commands through the branch name parameter of an HTTPS POST request to the backend Codex API, execute a malicious payload inside the agent’s container, and obtain a sensitive authentication token.
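The article does not show Codex's code, so the sketch below only illustrates the general bug class: a Git branch name interpolated into a shell command string. Git's ref-name rules (see `git check-ref-format`) reject spaces and a handful of characters. They still allow shell metacharacters such as `$`, `(`, `;` and backticks, so a name like `main$(id)` is a legal branch that a shell would expand. The helper names and the strict allowlist below are assumptions chosen for illustration, not BeyondTrust's or OpenAI's fix.

```python
import re
import shlex

# Hypothetical allowlist, deliberately stricter than Git's ref-name rules:
# letters, digits, '.', '_', '/', '-', with no leading '-' so the value can
# never be parsed as a command-line option.
_SAFE_BRANCH = re.compile(r"[A-Za-z0-9._/][A-Za-z0-9._/-]{0,254}")


def is_safe_branch(name: str) -> bool:
    """Return True only for branch names built from allowlisted characters."""
    return _SAFE_BRANCH.fullmatch(name) is not None and ".." not in name


def vulnerable_command(branch: str) -> str:
    # VULNERABLE: the branch is pasted into a string a shell will parse, so
    # "$(...)", backticks or ";" inside it would run as extra commands.
    return f"git checkout {branch}"


def quoted_command(branch: str) -> str:
    # Better, if a shell string is unavoidable: shlex.quote turns the value into
    # a single literal word for POSIX shells. It does NOT stop a leading '-'
    # from being read as a git option.
    return f"git checkout {shlex.quote(branch)}"


def safe_argv(branch: str) -> list[str]:
    # Preferred: validate, then return an argv list for subprocess.run(argv)
    # without shell=True, so no shell ever interprets the value.
    if not is_safe_branch(branch):
        raise ValueError(f"rejected branch name: {branch!r}")
    return ["git", "checkout", branch]
```

For instance, `is_safe_branch("feature/login-fix")` returns `True`, while `quoted_command("main$(id)")` returns `git checkout 'main$(id)'`. Passing an argument list to `subprocess.run` avoids shell parsing altogether. The allowlist is defense in depth on top of that.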

“This allowed lateral movement and read/write access to the victim’s entire codebase,” Kinnaird McQuade, chief security architect at BeyondTrust, said in a post on X. The issue was reported on December 16, 2025, and patched by OpenAI as of February 5, 2026. It affects the ChatGPT website, Codex CLI, Codex SDK, and the Codex IDE Extension.

BeyondTrust said the same branch-name injection technique can also be extended to steal GitHub installation access tokens and run bash commands in code review containers whenever @codex is mentioned on GitHub.

“After setting up a malicious branch, we mentioned Codex in a pull request (PR) comment,” he explained. “Codex then started a code review container, created a task for the repository and branch, executed the payload, and forwarded the response to an external server.”

The study also highlights the growing risk that privileged access granted to AI coding agents could be weaponized, providing a “scalable attack path” into enterprise systems without triggering traditional security controls.

“As AI agents become more deeply integrated into developer workflows, the security of the containers in which they run and the inputs they consume must be treated with the same rigor as security boundaries for other applications,” BeyondTrust said. “The attack surface is growing, and the security of these environments must keep up.”
