Anthropic’s Claude Mythos Preview has dominated security discussions since its April 7 announcement. Initial reports describe a powerful, cybersecurity-focused AI system that can identify vulnerabilities at scale, which raises serious questions about how quickly organizations can verify, prioritize, and remediate what it finds.

Subsequent discussion has largely focused on whether this is a step change or incremental progress. Does restricting access to Microsoft, Apple, AWS, and JPMorgan actually reduce risk, or does it simply concentrate defensive advantage in companies that are already well defended? What happens when adversaries (state actors or criminal enterprises) build comparable capabilities?

These questions are important. But there is a quieter operational issue that is getting far less airtime, and it is the one that will determine whether most organizations weather this change.

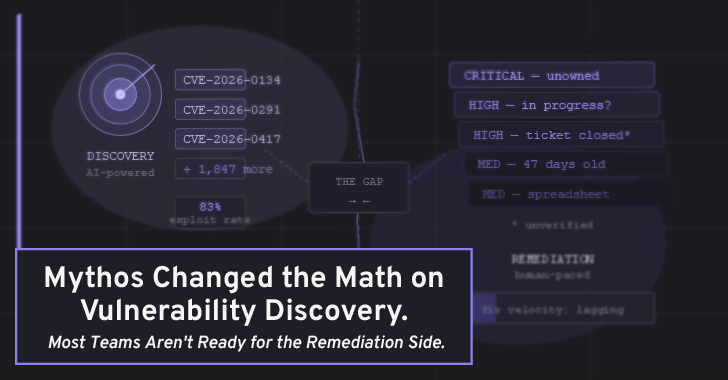

The gap between discovery and remediation

The main focus of the Mythos announcement, and of the broader AI security conversation it has sparked, is finding vulnerabilities faster. That’s valuable. But finding a vulnerability and fixing it are two completely different workflows, and the gap between them is where most security programs quietly leak. That’s exactly the gap PlexTrac was built to fill.

Consider what typically happens after a penetration test or vulnerability scan produces a significant finding. The finding lands in someone’s inbox as a spreadsheet, a ticket, or a PDF report. The security team knows about it. The engineering team may or may not. Ownership of the remediation is ambiguous. There is no clear way to track whether the fix was actually deployed, deprioritized, or scheduled for retesting. Meanwhile, the finding stays open.

AI models like Mythos dramatically accelerate the input side of this pipeline. They can discover vulnerabilities at a speed and depth that human red teams cannot match. But if your organizational infrastructure for triaging, prioritizing, communicating, and validating fixes hasn’t kept up, faster discovery can only mean a growing backlog of unresolved critical issues.

This is a problem that models like Mythos actually exacerbate. If your current penetration testing process takes three weeks to uncover 10 high-severity findings and remediation is already struggling to keep up, what happens when the same surface area is continuously scanned and produces findings 10 times faster?
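A back-of-the-envelope sketch makes the question concrete. The arrival rate of 10 findings per three weeks comes from the example above; the remediation capacity of 3 fixes per week is an invented figure for illustration, and the model itself (constant rates, no false positives) is a deliberate simplification.

```python
def backlog_over_time(findings_per_week: float,
                      fixes_per_week: float,
                      weeks: int) -> list[float]:
    """Return the number of open findings at the end of each week."""
    backlog = 0.0
    history = []
    for _ in range(weeks):
        # New findings arrive, the team closes what it can, backlog never goes negative.
        backlog = max(0.0, backlog + findings_per_week - fixes_per_week)
        history.append(backlog)
    return history


baseline_rate = 10 / 3   # 10 high-severity findings per three-week test
capacity = 3.0           # hypothetical: fixes validated per week

baseline = backlog_over_time(baseline_rate, capacity, weeks=12)
accelerated = backlog_over_time(baseline_rate * 10, capacity, weeks=12)

print(f"Backlog after 12 weeks at today's pace: {baseline[-1]:.0f}")
print(f"Backlog after 12 weeks at 10x discovery: {accelerated[-1]:.0f}")
```

Under these assumptions a team that is only slightly behind today (about 4 open findings after a quarter) ends up with several hundred open findings at 10x discovery speed, because remediation capacity did not change.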

Schneier’s false positive problem is real

Bruce Schneier makes a perceptive point in his article: we don’t know Mythos’s false positive rate on unfiltered output. Anthropic reports 89% agreement on severity with human contractors, but that is a selected sample, not the full distribution. AI systems that detect almost all real bugs also tend to report plausible-looking vulnerabilities in code that has already been patched or fixed.

This matters operationally. A tool that generates convincing false positives at scale increases the burden on security teams rather than reducing it. Every fake critical finding that has to be triaged and dismissed is time security engineers are not spending on real ones. The value of AI-assisted vulnerability discovery is realized only if the resulting findings can be efficiently evaluated, weighed against actual business risk, and routed to the right people.

The infrastructure problem in practice

The teams that will best absorb the Mythos-era discovery velocity are those that have already implemented three things:

Centralized management of findings. Not a ticketing system, and not a Jira board bolted to a spreadsheet, but a dedicated place where vulnerability findings from multiple sources (scanner output, penetration test reports, red team engagements) are stored in a normalized, queryable format. Without this, bringing in AI-generated results only creates one more data silo.

Contextual prioritization of risks. Raw CVSS scores are a starting point, not a decision. A critical finding in an air-gapped internal system is not the same risk as the same finding in a customer-facing API. Organizations that can only sort by severity score will be overwhelmed when AI discovery starts producing findings in volume. Organizations that can score assets for criticality, business impact, and exposure can prioritize intelligently.


Closed-loop remediation tracking. This is where most programs actually fail. A finding that has not been fixed and verified is just a liability with a name. Continuous retesting, structured remediation workflows, and clear ownership handoffs are not nice-to-have features. They are the difference between a security program that improves over time and one that only accumulates documented risk.
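The first two items can be sketched in a few lines: a normalized finding record, plus a score that adjusts CVSS for context. Everything here is illustrative. The field names, the weights, and the scoring formula are assumptions made for this example, not PlexTrac’s scoring model or any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    title: str
    asset: str
    source: str               # e.g. "scanner", "pentest", "red_team"
    cvss: float               # CVSS base score, 0.0 to 10.0
    asset_criticality: float  # hypothetical weight in [0, 1]
    exposure: float           # hypothetical weight in [0, 1]; 1.0 = internet-facing


def contextual_score(f: Finding) -> float:
    """Hypothetical formula: scale CVSS by the mean of criticality and exposure."""
    return round(f.cvss * (0.5 * f.asset_criticality + 0.5 * f.exposure), 2)


findings = [
    Finding("SQL injection", "air-gapped lab system", "pentest", 9.8,
            asset_criticality=0.4, exposure=0.1),
    Finding("SQL injection", "customer-facing API", "scanner", 9.8,
            asset_criticality=0.9, exposure=1.0),
    Finding("Verbose error page", "customer-facing API", "scanner", 5.3,
            asset_criticality=0.9, exposure=1.0),
]

# Sorting by raw CVSS cannot separate the two SQL injection findings.
# Sorting by contextual score can.
ranked = sorted(findings, key=contextual_score, reverse=True)
for f in ranked:
    print(f"{contextual_score(f):>5}  {f.title} on {f.asset} (CVSS {f.cvss})")
```

With these invented weights, the medium-severity issue on the exposed API outranks the critical one on the air-gapped system. Whether that is the right call is a business decision, which is the point: the weights need to be configurable, not hard-coded.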

PlexTrac is a penetration testing reporting and exposure management platform built on exactly this path: centralized findings data, contextual risk prioritization, and structured remediation workflows.

Mythos (and tools like it) do a great job of telling you that your home has structural issues. PlexTrac is the operational layer that makes sure those issues actually get fixed, that the right contractors are assigned, and that someone validates the work before the job is closed out. You need both. Most organizations have invested heavily in better home inspections while keeping their repair tracking in a shared Google Doc.

The access issue identified by Schneier is also a workflow issue.

One criticism of Project Glasswing is that access to Mythos is concentrated among 50 large vendors, meaning the organizations best equipped to act on the findings get them first. As a Fortune article by a former national cyber director pointed out, Fortune 500 companies are well positioned to absorb and remediate these findings. Those most at risk, and with the fewest resources, are small and medium-sized businesses, regional infrastructure operators, and specialized industrial systems.

This is a structural access problem that needs to be addressed by policy. But it is also a workflow problem. Even if access were democratized, many small organizations lack the operational infrastructure to turn AI-generated security findings into actionable remediation. Tools that reduce process overhead (faster reporting, clearer communication of findings, lower-friction remediation handoffs) probably matter more to these organizations than to companies that can already throw people at the problem.

Practical points

The Mythos moment is a useful forcing function. Not because your systems will definitely be compromised tomorrow, but because it makes visible a gap that has been quietly widening for years. Security teams keep getting better at finding problems, while the organizational mechanisms for fixing them evolve much more slowly.

The right reaction is not panic. Nor is it waiting to see whether Glasswing access eventually expands to include you. It is to use the Mythos announcement as a prompt to audit your own remediation pipeline. How long does it take from the time a critical finding is discovered until a fix is validated? How many high-severity findings are currently sitting in an ambiguous “in progress” state? Can you actually retest after remediation, or do you just trust that the engineering ticket was closed?

You don’t need access to Mythos to answer these questions. And for most teams, the answers will be more uncomfortable than anything in Anthropic’s 245-page technical document.
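Those three questions map onto simple measurements. Here is a minimal sketch, assuming you can export findings with a discovery date, a validation date, and a status. The field names, status values, and sample records are all invented for illustration.

```python
from datetime import date
from statistics import median

# Hypothetical export from wherever your findings live.
records = [
    {"id": "F-1", "severity": "critical", "found": date(2025, 1, 6),
     "validated": date(2025, 1, 20), "status": "validated"},
    {"id": "F-2", "severity": "high", "found": date(2025, 1, 8),
     "validated": None, "status": "in_progress"},
    {"id": "F-3", "severity": "critical", "found": date(2025, 2, 3),
     "validated": date(2025, 3, 17), "status": "validated"},
    {"id": "F-4", "severity": "high", "found": date(2025, 2, 10),
     "validated": None, "status": "closed_unverified"},
]


def days_to_validated_fix(recs):
    """Days from discovery to a retested, validated fix (validated findings only)."""
    return [(r["validated"] - r["found"]).days
            for r in recs if r["validated"] is not None]


def count_by_status(recs, status):
    """Number of findings currently in the given status."""
    return sum(1 for r in recs if r["status"] == status)


durations = days_to_validated_fix(records)
median_days = median(durations)
stalled = count_by_status(records, "in_progress")
unverified = count_by_status(records, "closed_unverified")

print(f"Median days from discovery to validated fix: {median_days}")
print(f"Findings stuck in progress: {stalled}")
print(f"Tickets closed without a retest: {unverified}")
```

If you cannot produce the inputs for a script like this, that is itself the answer to the audit.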
