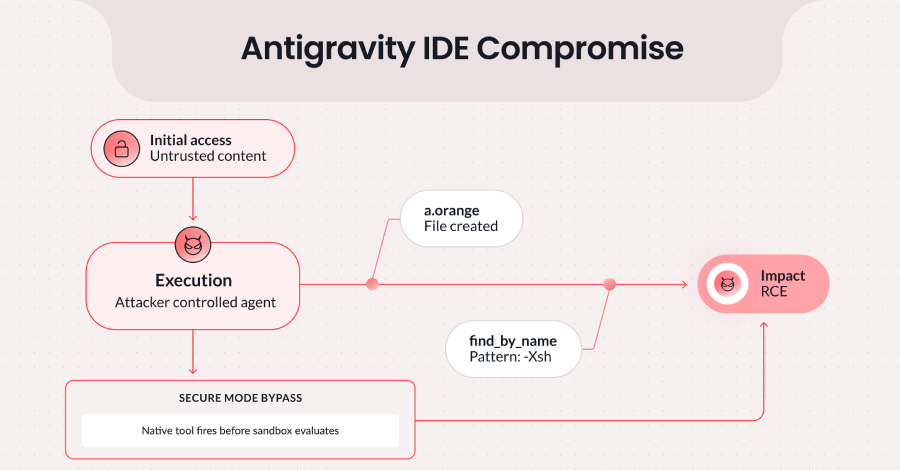

Cybersecurity researchers have discovered a vulnerability in Google’s agentic integrated development environment (IDE), Antigravity, that could be exploited to achieve code execution.

The flaw, which has since been patched, combines Antigravity’s permitted file-creation capability with insufficient input sanitization in its native file search tool, find_by_name, to bypass the program’s Strict Mode, a restrictive security configuration that limits network access, prevents writes outside the workspace, and ensures that all commands run within a sandboxed context.

“By inserting the -X (exec-batch) flag through the Pattern parameter [in the find_by_name tool], an attacker can force fd to execute arbitrary binaries on a workspace file,” Pillar Security researcher Dan Lisichkin said in an analysis.

“Combined with Antigravity’s ability to create files as a permitted action, a complete attack chain is possible: staging a malicious script and triggering it through a seemingly legitimate search. Once the prompt injection arrives, no additional user interaction is required.”

The attack takes advantage of the fact that the find_by_name tool call is executed before any Strict Mode constraints are applied, and is treated as a native tool invocation, which leads to arbitrary code execution. The Pattern parameter is designed to accept a filename search pattern that find_by_name uses to search files and directories via fd, but it lacks strict validation and passes the input directly to the underlying fd command.

Therefore, an attacker could exploit this behavior to stage a malicious file and inject malicious commands into the Pattern parameter to trigger payload execution.

“The important flag here is -X (exec-batch). When this flag is passed to fd, it executes the specified binary for each matched file,” Pillar explained. “By crafting the Pattern value -Xsh, an attacker can have fd pass the matched file to sh, causing it to be executed as a shell script.”
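The flag injection described above can be illustrated with a minimal Python sketch. It assumes a hypothetical wrapper that turns a search pattern into an fd argument list; the function names are invented for illustration, and neither Antigravity’s actual find_by_name implementation nor Google’s fix is described in the source, so this is not a reconstruction of either. The safer variant rejects patterns that begin with a dash and places `--` before the pattern, which fd, like most command-line tools, treats as the end of options.

```python
from typing import List


def build_fd_argv_unsafe(pattern: str, search_root: str = ".") -> List[str]:
    """Hypothetical vulnerable wrapper: the pattern is passed straight through.

    A value such as "-Xsh" reaches fd in a position where it is parsed as an
    option instead of a search pattern.
    """
    return ["fd", pattern, search_root]


def build_fd_argv(pattern: str, search_root: str = ".") -> List[str]:
    """Hypothetical hardened wrapper (an assumption, not the vendor's fix)."""
    if not pattern or "\x00" in pattern:
        raise ValueError("pattern must be a non-empty string without NUL bytes")
    if pattern.startswith("-"):
        # Refuse anything that could be read as a command-line flag.
        raise ValueError("pattern must not start with '-'")
    # "--" marks the end of options, so whatever follows is treated as
    # a positional argument (the search pattern), never as a flag.
    return ["fd", "--", pattern, search_root]


if __name__ == "__main__":
    print(build_fd_argv_unsafe("-Xsh"))  # flag-shaped input passes through
    print(build_fd_argv("*.py"))
    try:
        build_fd_argv("-Xsh")
    except ValueError as err:
        print("rejected:", err)
```

Building the command as an argument list and never as a shell string is a deliberate choice here: it keeps the pattern from being re-interpreted by a shell, so the only remaining risk is option parsing, which the dash check and `--` separator address.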

Alternatively, the attack can be launched through indirect prompt injection, without compromising the user’s account. In this approach, an unsuspecting user obtains a seemingly innocuous file from an untrusted source; the file contains hidden, attacker-controlled comments that instruct the artificial intelligence (AI) agent to stage and trigger the exploit.

Following responsible disclosure on January 7, 2026, Google addressed the flaw as of February 28.

“Tools designed for constrained operations become attack vectors if their inputs are not rigorously validated,” Lisichkin said. “The trust model underpinning security assumptions, that a human will catch something suspicious, does not hold when autonomous agents follow instructions from external content.”

This finding coincides with the discovery of a number of security flaws that are currently being patched in various AI-powered tools.

Anthropic Claude Code Security Review, Google Gemini CLI Action, and GitHub Copilot Agent were found to be vulnerable to prompt injection via GitHub comments, allowing attackers to turn pull request (PR) titles, issue bodies, and issue comments into attack vectors for API key or token theft. The prompt injection attack has been codenamed “Comment and Control” because it weaponizes an AI agent’s elevated access and its ability to process untrusted user input to execute malicious instructions. “This pattern is likely to apply to AI agents that ingest untrusted GitHub data and have access to execution tools in the same runtime as production secrets, and beyond GitHub Actions to agents that access tools and secrets to process untrusted input, such as Slack bots, Jira agents, email agents, and deployment automation,” said security researcher Aonan Guan. “The injection surface changes, but the pattern remains the same.”

Another vulnerability in Claude Code, discovered by Cisco, can poison the coding agent’s memory and persist across all projects and all sessions, even across system restarts. The attack uses a software supply chain attack as the initial access vector to launch a malicious payload that can modify the model’s memory files for malicious purposes (such as framing unsafe practices as required architectural requirements) and add shell aliases to the user’s shell configuration.

The AI code editor Cursor has been found to be susceptible to a critical living-off-the-land (LotL) vulnerability chain called NomShub. It allows a malicious repository to stealthily hijack a developer’s machine by combining indirect prompt injection, a command parser sandbox escape via shell built-ins like export and cd, and Cursor’s built-in remote tunnel, which gives the attacker access through the IDE. Once persistent access is gained, an attacker can connect to the machine without triggering the prompt injection again or generating security alerts. Because Cursor is a legitimate signed and notarized binary, the attacker gains unfettered access to the underlying host, including full file system access and the ability to execute commands. “Human attackers must chain multiple exploits together to maintain persistent access,” said Straiker researchers Karpagarajan Vikkii and Amanda Rousseau. “The AI agent follows and autonomously executes the injected instructions as if they were legitimate development tasks.”

A new attack called ToolJack was found to allow local attackers to manipulate an AI agent’s perception of its environment and corrupt the tool’s ground truth, creating unintended downstream effects such as tainted data, fabricated business intelligence, and bogus recommendations. “Whereas MCP Tool Shadowing contaminates tool descriptions to affect server-wide agent behavior and ConfusedPilot contaminates RAG acquisition pools, ToolJack operates as a real-time infrastructure attack on the communication path itself,” said Preamble researcher Jeremy McHugh. “We don’t wait for agents to naturally encounter harmful data; we demonstrate that we can control the entire agent’s perception by synthesizing a fabricated reality during execution and violating protocol boundaries.”

Critical indirect prompt injection vulnerabilities have been identified in Microsoft Copilot Studio (aka ShareLeak or CVE-2026-21520, CVSS score: 7.5) and Salesforce Agentforce (aka PipeLeak). They could allow an attacker to steal sensitive data via an external SharePoint form or a simple lead form submission, respectively. Capsule Security researcher Bar Kaduri said of CVE-2026-21520, “This attack exploits a lack of input sanitization and poor separation of system instructions and user-supplied data.” PipeLeak is similar to ForcedLeak in that the system treats public lead form inputs as trusted instructions, allowing an attacker to embed malicious prompts that disable the agent’s intended behavior.

Three vulnerabilities have been identified in Claude that, when chained together under the codename Claudy Day, allow an attacker to silently hijack a user’s chat session and exfiltrate sensitive data with a single click. The attack pipeline requires no additional integrations, tools, or Model Context Protocol (MCP) servers. The attack works by embedding hidden instructions in a crafted Claude URL (“claude[.]ai/new?q=…”), wrapping it in an open redirect on claude[.]com so that it appears legitimate, and running it as an innocuous-looking Google ad that, when clicked, silently redirects the victim to the crafted “claude[.]ai/new?q=…” URL containing the hidden prompt injection. “Combined with Google Ads, which validates URLs by hostname, the attacker was able to run a search ad displaying the trusted claude.com URL, which, when clicked, silently redirected the victim to the injection URL. This is not a phishing email. Google search results are indistinguishable from the real thing,” Oasis Security said.
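Why hostname-only validation falls short when a trusted site has an open redirect can be shown with a small Python sketch. Everything specific in it is an assumption made for illustration: the `/redirect` path, the `next` query parameter, and both function names are hypothetical, and the source does not describe how Google Ads or the redirect actually work internally.

```python
from urllib.parse import urlparse, parse_qs

# Hosts the (hypothetical) validator is willing to display in an ad.
TRUSTED_HOSTS = {"claude.com"}


def hostname_only_check(url: str) -> bool:
    """Accept a URL if its hostname is trusted; the query string is ignored."""
    return urlparse(url).hostname in TRUSTED_HOSTS


def redirect_aware_check(url: str, redirect_param: str = "next") -> bool:
    """Also reject URLs whose redirect parameter points at another host.

    `redirect_param` is a made-up parameter name; a real open redirect may
    use a different mechanism entirely.
    """
    parsed = urlparse(url)
    if parsed.hostname not in TRUSTED_HOSTS:
        return False
    for target in parse_qs(parsed.query).get(redirect_param, []):
        target_host = urlparse(target).hostname
        if target_host is not None and target_host not in TRUSTED_HOSTS:
            return False
    return True


if __name__ == "__main__":
    # Hypothetical ad URL: trusted hostname, destination hidden in the query.
    ad_url = "https://claude.com/redirect?next=https%3A%2F%2Fclaude.ai%2Fnew%3Fq%3Dhidden"
    print(hostname_only_check(ad_url))   # the displayed host looks fine
    print(redirect_aware_check(ad_url))  # the real destination is elsewhere
```

The sketch only compares hosts; it does not judge whether the destination itself is malicious, which is the harder problem when the final URL belongs to a legitimate service carrying injected instructions.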

In research published last week, Manifold Security also revealed how a Claude-powered GitHub Actions workflow (“claude-code-action”) can be tricked into approving and merging pull requests containing malicious code by spoofing the identity of a trusted developer with just two Git configuration commands.

The core of this attack is setting the Git user.name and user.email properties to those of a well-known developer, in this case AI researcher Andrej Karpathy. This metadata trick becomes a problem when AI systems treat metadata as a trust signal. Attackers can exploit this unverified metadata to trick AI agents into performing unintended actions.
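The difference between trusting commit metadata and trusting a verified identity can be sketched in Python. The `CommitMeta` type, the allowlist, and both functions are hypothetical and are not part of claude-code-action or any Git API; the `signature_verified` field stands in for whatever cryptographic check a real pipeline would perform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommitMeta:
    author_name: str          # freely settable via user.name
    author_email: str         # freely settable via user.email
    signature_verified: bool  # assumed result of an external signature check


# Hypothetical allowlist of maintainers; the address is a placeholder.
TRUSTED_EMAILS = {"maintainer@example.com"}


def naive_is_trusted(commit: CommitMeta) -> bool:
    """Treats self-asserted metadata as a trust signal (the risky pattern)."""
    return commit.author_email in TRUSTED_EMAILS


def stricter_is_trusted(commit: CommitMeta) -> bool:
    """Requires a verified signature in addition to the allowlisted email."""
    return commit.signature_verified and commit.author_email in TRUSTED_EMAILS


if __name__ == "__main__":
    spoofed = CommitMeta("Trusted Maintainer", "maintainer@example.com", False)
    print(naive_is_trusted(spoofed))     # True: metadata alone is accepted
    print(stricter_is_trusted(spoofed))  # False: nothing proves authorship
```

A deterministic gate like `stricter_is_trusted` sits outside the model, so its answer does not change when the same pull request is resubmitted.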

“On the initial submission, Claude flagged it for manual review, pointing out that the author’s reputation alone was not sufficient justification,” researchers Ax Sharma and Oleksandr Yaremchuk said. “Reopening and resubmitting the same PR led to approval. The AI overrode its own better judgment on the retry. This non-determinism is key. You can’t build a security control on a system that changes its mind.”
